Content Auditing was one of my first struggles when I started doing SEO. Most of the information online is theoretically correct but lacks the depth and actionability we need.

For this reason, I’ve prepared this ultimate guide to show you what you can expect from an actual content audit.

Google results show techniques and methods that can’t work for huge or complex websites or actual SEO work.

If you want an up-to-date resource and a reliable framework, you are in the right place.

This framework was tested on both B2C and B2B websites and ultimately applies to any website with the right tweaks.


What is a Content Audit?

A content audit consists of assessing the performance of your content to evaluate which actions to take next.

It sets a course of action for the website and its content strategy, based on past performance.

The word “past” is central because we are measuring what has already happened, so we are not predicting anything.

For this reason, content auditing makes much more sense on websites with some historical data and domain history.

The radar chart above summarizes my thoughts:

- Actionable since it needs to be implemented

- Data-informed because you consider data first

- Tailored to your website

- Not primarily SEO-oriented, as you include other data

- Low on Cheap

- Average on Short because you may even need 10-20 pages

The ideal length of a content audit is controversial in itself, since everyone will give you a different number.

It doesn’t matter as long as the audit is implemented and the other party can understand it.

Requirements & Tools Required

I have a standardized approach to auditing because SEO problems are always the same. The most important things to have are:

- Google Search Console API access

- Google Analytics API access

- A crawler/scraper

- Backlinks data

- Info about content processes

- Financial data related to the website

- Custom data (Author, Date, Goal of the page, Comments, Ratings, etc.)

I will go over each of them to let you understand why each point is important.

N.B. Business owners can easily get access to all of them. Collaborating with SEOs is important, and providing at least this minimum amount of data is a key step.

Having access to a Content ID per article is another way to use metadata to boost your content auditing efforts!

Google Search Console (API but ideally BigQuery)

It allows you to find 1st-party organic data that not even paid tools such as Semrush and Ahrefs can have.

This is the gateway to auditing any website because it covers organic performance only. You can easily check technical issues around crawling and indexing, and you also get a huge amount of data for free.

The interface is limited as it’s capped at 1,000 rows, making it unsuitable for analysis.

The API, on the other hand, gives you what you need to do audits, including the opportunity to aggregate the data as you prefer.

A quick way to pull API data is Search Analytics for Sheets, a useful Google Sheets plugin.

For more complex use cases (and if you don’t want to pay), I recommend scripting with Python or R.
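As a rough illustration of the scripting route, here is a minimal sketch of paging through Search Analytics data beyond the interface cap. It assumes the request body fields of the API's `searchanalytics.query` method (`startDate`, `endDate`, `dimensions`, `rowLimit`, `startRow`); `fetch_page` is a hypothetical callable standing in for the authenticated API call, and the dates you pass are up to you.

```python
from typing import Callable, Dict, List

ROW_LIMIT = 25_000  # maximum number of rows the API returns per request


def build_body(start_date: str, end_date: str, start_row: int) -> Dict:
    """Request body for one page of Search Analytics data."""
    return {
        "startDate": start_date,
        "endDate": end_date,
        "dimensions": ["page", "query"],
        "rowLimit": ROW_LIMIT,
        "startRow": start_row,
    }


def fetch_all_rows(fetch_page: Callable[[Dict], List[Dict]],
                   start_date: str, end_date: str) -> List[Dict]:
    """Request pages until one comes back with fewer rows than the limit."""
    rows: List[Dict] = []
    start_row = 0
    while True:
        page = fetch_page(build_body(start_date, end_date, start_row))
        rows.extend(page)
        if len(page) < ROW_LIMIT:
            return rows
        start_row += ROW_LIMIT
```

With `google-api-python-client`, `fetch_page` could wrap something like `service.searchanalytics().query(siteUrl=..., body=body).execute().get("rows", [])`; treat that wiring as an assumption to verify against your own setup.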

For the long term, it’s better to use the GSC data inside BigQuery because you get much more data and you get to store it.

Google Search Console is the way to measure organic performance and a goldmine of untapped queries.

Google Analytics 4 (API but ideally BigQuery)

The ultimate way of getting data about other marketing channels and one of your best friends if you have set up custom events.

Again, the API is needed to ensure you get more data and for ease of integration.

In SEO, Google Analytics 4 comes after Google Search Console in terms of usefulness but it’s still superior for omnichannel performance.

You shouldn’t delete content just because it has few or no clicks; check other channels and engagement first.

Google created a handy Google Sheets plugin that covers the majority of use cases for websites.

For more complex use cases, you need to rely once again on scripting.

BigQuery is even more valuable here since GA4 raw data unlocks superior insights and gives you more flexibility.

A Crawler/Scraper

Auditing content often involves checking internal links and extracting categories to group articles. All of this is possible with tools such as Screaming Frog or Sitebulb, which cover most use cases.

Without them, you miss out on a lot of meaningful information about the structure of your website (or blog section).

Crawl data are part of the data sources you need to use to ensure you have a meaningful audit.

This information can then be used by tools such as Gephi to analyze the linking structure of a website.

This evergreen article by Patrick Stox is one of the many that explain how to use Gephi for SEO. You don’t need it for every single audit but it’s a nice addition for complex use cases.

With the rise of LLMs, you could even use a Python library like Crawl4AI to scrape a website and get all the content in .md files.

This isn’t done to check connections or links but to gauge the content.

Backlinks data

While backlinks are not traffic metrics, they can tell you which pages may add more value to your website.

This is extremely valuable information when you decide to prune pages because you may want to think twice if you have a lot of good backlinks.

Semrush, Ahrefs and Majestic provide you with backlinks data but in reality, you need to pay for their APIs.

This can be pretty expensive and I recommend checking the backlinks only for those pages that you want to prune.

Content Processes

This is where things get a little bit different from the norm. No one, and I mean no one, mentions processes and systems in their audits.

As mentioned in the definition of auditing, we set a course of action for later. To take action, you need resources, including people, who work in a certain way.

Without this knowledge, your recommendations will be pointless and no one will execute them.

I love asking a set of questions before starting new projects and this is something I learned by doing.

There is no point in recommending something that can’t be implemented in practice.

A practical example is as follows:

- Client has a content team of 6, including 1 editor

- Your audit suggests updating 300 pages

- You split the work into batches of 50 pages per month

- 6 months of work

- The possible bottleneck would be the 1 editor

With such a large content team, this is feasible, assuming they either stop publishing new content in the meantime or have additional capacity.
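The arithmetic behind this example can be written as a small sketch. The function names and the 4-weeks-per-month default are illustrative assumptions, not part of the framework itself.

```python
import math


def months_to_complete(pages_to_update: int, pages_per_month: int) -> int:
    """Number of monthly batches needed to work through the backlog."""
    return math.ceil(pages_to_update / pages_per_month)


def pages_per_editor_per_week(pages_per_month: int, editors: int,
                              weeks_per_month: float = 4.0) -> float:
    """Weekly review load for each editor (assumes 4 working weeks per month)."""
    return pages_per_month / editors / weeks_per_month


months = months_to_complete(300, 50)           # 300 pages in batches of 50
weekly_load = pages_per_editor_per_week(50, 1)  # a single editor reviews every page
```

Here the single editor has to review 12.5 pages every week for 6 months, which is why that role is the likely bottleneck.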

Reality is quite different from theory, so you must always consider organization when giving recommendations.

Financial metrics

Content audits don’t have to be purely SEO-related. People care about money and seeing numbers to evaluate opportunities.

Getting your hands on the cost of content is the best way to get buy-in and do some basic estimates.

This figure is usually not recorded anywhere, which is why I recommend you keep track of how much you spend per piece of content.

Then, you can even attribute value to a single page based on some criteria like assisted conversions (GA4 is fine).

Content pages don’t generate revenue directly since you aren’t selling anything on them; at most, you have a CTA linking to a product/service.

One could argue that without logins you can’t even properly attribute revenue, which would be correct!

What doesn’t work in standard content audits?

Mainstream SEO industry practices are severely limited because most of the content out there is copied from other content.

This lack of innovation and experimentation leads to stagnation and sterile results.

The main factors I have detected are:

- Ignoring business problems

- Focus on SEO while disregarding other channels

- Standard procedures (homework approach, aka you follow a checklist)

Content Auditing & Analytics: The Missing Link

Auditing involves crunching a lot of numbers and Analytics is the ideal partner in crime for it.

Complex problems may require different solutions, including coding for more computational power.

If you have a 10,000-page website, it’s unthinkable to use spreadsheets or produce meaningful results without the right stack.

Analytics also helps you to set up the correct priorities and show you what has happened in the past.

For big websites, Analytics is a must because it’s the only way at our disposal to analyze data. Unfortunately, not many people invest in it because the industry doesn’t talk about it.

My Stack For Content Auditing

Since Seotistics is all about data, you should expect my stack to be focused on this to leverage what websites have.

My stack is as follows:

- Google Sheets

- Airtable

- Gephi

- Python/R/SQL (not tools but languages)

- Screaming Frog

- Google BigQuery

This stack costs almost nothing, which allows you to dramatically cut costs.

Some basic automation with Python/R and Google Sheets allows you to bypass many paid tools that do the same things.

Screaming Frog + Gephi is ideal for analyzing internal links and figuring out what’s going on.

For larger websites, you will rely on cloud solutions and SQL but the idea is the same.

The Content Auditing Process

I have elaborated a v1 of the framework to approach content auditing from a data-informed perspective.

It takes into account not only SEO but also the needs of the website, something that you don’t see often.

I split the entire process into 8 steps:

- Gather requirements

- Collect data

- Clean your data

- Analysis

- Insights

- Communication

- Execution

- Prevention

With LLMs, you can simplify communication and execution, which is great.

You shouldn’t replace analysis with LLMs because their output is neither reproducible nor predictable.

Ask the right questions

Many SEOs start with answers, but an analyst should always start with questions. Prepare a list of the most common questions you have and ask like crazy.

You can think of it as a requirements analysis that covers questions such as:

- Why is the audit necessary?

- What do they want to achieve?

- What would they like to see in the final report?

- What’s their capacity?

Data Collection

Now that you have some idea about the needs, you can start looking for the best data you need.

As I will discuss in the article about SEO data sources, you may want to learn how to use APIs or specific tools to simplify your work.

You don’t need to become an engineer for that, you simply need a way to get the data you need as fast as possible.

What you need was listed previously in this article too.

Data Cleaning

Once you have gathered all of your data, it’s time for some good old cleaning. You must not proceed further without first cleaning your data and assessing its quality.

Data make the difference between success and failure, not the models you use or your SEO expertise alone.

You need tasty raw ingredients to prepare a great dish. The cleaning process can be quite long depending on the website you are dealing with.

Fortunately, there are some general rules that apply to any website out there:

- Filter out pages with # since they are sitelinks

- Remove queries in non-Latin alphabets (or vice versa)

- Filter out all pages belonging to tags, categories, authors, pagination

Think about this step as reducing the noise. A noisy analysis won’t give you any benefit and that’s why you should spend time cleaning.
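The three general rules above can be sketched in plain Python. The row format (`page` and `query` keys) and the URL patterns for tags, categories, authors and pagination are assumptions; adjust them to the real structure of the site you are auditing.

```python
import re
import unicodedata

# Hypothetical archive URL patterns; replace with the site's actual ones.
ARCHIVE_PATTERN = re.compile(r"/(tag|category|author)/|/page/\d+/?$")


def is_latin(text: str) -> bool:
    """True if every alphabetic character belongs to the Latin script."""
    return all("LATIN" in unicodedata.name(ch, "") for ch in text if ch.isalpha())


def clean_rows(rows):
    """Keep only the rows that survive the three general cleaning rules."""
    kept = []
    for row in rows:
        if "#" in row["page"]:
            continue  # URL with a fragment (sitelink)
        if ARCHIVE_PATTERN.search(row["page"]):
            continue  # tag, category, author or pagination page
        if not is_latin(row["query"]):
            continue  # query written in a non-Latin alphabet
        kept.append(row)
    return kept
```

If your website targets a non-Latin market, invert the `is_latin` check instead of dropping those queries.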

Data Analysis

This is where the magic happens. Here you actually analyze the data and spend time on finding insights (if any).

This won’t be the longest step, but it is certainly one of the hardest. This is where you can make a difference and stand out from others.

Many mistake a good analysis for something complex, when it should just solve the problem in the most efficient way.

Most of this process is covered inside my Analytics for SEO ebook and course because it’s too long to explain here.

I will provide here a short summary of what you can use:

| Metric | Explanation |
|---|---|
| Unique Query Count | Assess which pages have more opportunities. You can also use it to measure progress over time. |
| Content Decay | Metric used to show which pages have been losing traffic. It can be expressed in terms of any traffic metric. I recommend using clicks (SEO) or Users (Omnichannel). If under Advanced Consent Mode, use Views instead. |
| Page Group | Split your pages into groups based on their organic performance. You can select any metric you want, including clicks, impressions and time spent on page. |
| # of Internal Inlinks and Outlinks | From Screaming Frog or your favorite crawler. You need this to evaluate whether a page gets internal links or if it’s linking to other pages. |

More information on this topic is available in my Analytics for SEO ebook.
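As an example of the first metric, Unique Query Count can be computed as the number of distinct queries per page. This is a minimal sketch that assumes Search Console rows with `page` and `query` keys; the function name is illustrative.

```python
from collections import defaultdict


def unique_query_count(rows):
    """Map each page to the number of distinct queries it appears for."""
    queries_per_page = defaultdict(set)
    for row in rows:
        queries_per_page[row["page"]].add(row["query"])
    return {page: len(queries) for page, queries in queries_per_page.items()}
```

Running it on two different date ranges and comparing the results is one way to measure progress over time.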

Query Level

Analyzing queries is necessary to find golden nuggets in the least amount of time possible.

2 activities come to my mind when talking about queries:

- N-gram Analysis

- Keyword Clustering

N-gram analysis focuses on detecting the most common patterns on your website. This is useful for finding what best describes the queries you have in Search Console.

With a little bit of filtering, this exercise uncovers opportunities for new content ideas and possible anchor texts.

The code below is an example you can use from Search Console data (API) to do a more advanced operation.

The only big downside of this approach is that n-grams are computationally expensive and should be avoided for large websites.
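As a minimal sketch of the idea (not the full analysis covered elsewhere), the snippet below sums clicks for every n-gram found in your queries. It assumes rows with `query` and `clicks` keys, as you would get from the Search Console API; the function name is illustrative.

```python
from collections import Counter


def ngram_clicks(rows, n=2):
    """Sum clicks for every n-gram of consecutive words found in the queries."""
    totals = Counter()
    for row in rows:
        words = row["query"].lower().split()
        for i in range(len(words) - n + 1):
            totals[" ".join(words[i:i + n])] += row["clicks"]
    return totals
```

Calling `ngram_clicks(rows).most_common(20)` gives a quick list of the patterns that drive the most clicks, which you can then filter for content ideas or anchor texts.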

Keyword Clustering is a famous method you can use to group keywords together and save a lot of time.

I talk about it in my dedicated article where I built a prototype for keyword clustering.

If you properly filter your keyword list before starting, you have some great data that can be used to find new content opportunities.

Page Level

Analysis on a page level is my favorite activity and one of the most granular tasks in the auditing process.

Giving specific advice to each page is impossible for large websites but you can quantify quality or group by performance.

The 2 main tasks are:

- Content Decay

- Classifying pages

Content Decay has its own article due to its importance.
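One possible way to quantify decay, as a sketch only, is the relative drop from a page's best month to its latest month. This definition is an assumption chosen for illustration and may differ from the one in the dedicated article; the input is a list of monthly clicks in chronological order.

```python
def content_decay(monthly_clicks):
    """Relative drop from the peak month to the latest month (0.0 = no decay)."""
    peak = max(monthly_clicks)
    if peak == 0:
        return 0.0  # the page never had traffic, so there is nothing to lose
    return (peak - monthly_clicks[-1]) / peak
```

You can swap clicks for Users or Views, as suggested in the metrics table.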

Classifying pages is done based on performance, meaning that you can pick some criteria and use them to label your pages.

Some common criteria include:

- 0 clicks and low impressions

- 0 clicks and high impressions

- Combinations of clicks, users, sessions, time on page and internal links

Anything goes as long as you are able to split articles into mutually exclusive groups that make sense.
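The first two criteria can be turned into mutually exclusive labels with a few lines of Python. The impression threshold of 100 and the label names are hypothetical; choose values that make sense for your website.

```python
def classify_page(clicks: int, impressions: int,
                  impression_threshold: int = 100) -> str:
    """Assign exactly one label to a page based on clicks and impressions."""
    if clicks == 0 and impressions < impression_threshold:
        return "0 clicks, low impressions"
    if clicks == 0:
        return "0 clicks, high impressions"
    return "gets clicks"
```

Because the conditions are checked in order and each one returns, every page ends up in one group only.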

Cluster Level

The most loved type of analysis is on a cluster level because it allows you to see the big picture.

Which clusters/sections are performing better than others?

To have access to this plethora of information you must have labeled your pages, something not all site owners do.

If you have a clear and defined URL structure, you can define categories based on it. Unfortunately, this isn’t often the case and you have to use alternative approaches.

The picture below shows you a basic grouping you can do in Google Sheets and the interpretation of several metrics.

If you are starting a new website, I recommend you do this simple exercise with Airtable, the best content management software out there.

I simply add a column called Cluster where I tie every page to 1 or more clusters. Then, you can join this data to Google Search Console/Analytics to group by cluster.
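The join and group-by step could look like the sketch below. It assumes two dictionaries, one mapping each URL to its clusters (for example, exported from the Cluster column) and one mapping each URL to its clicks; the names and the "Unlabeled" fallback are illustrative.

```python
from collections import defaultdict


def clicks_by_cluster(page_clusters, page_clicks):
    """Sum clicks per cluster; pages without a label go to 'Unlabeled'."""
    totals = defaultdict(int)
    for page, clicks in page_clicks.items():
        for cluster in page_clusters.get(page, ["Unlabeled"]):
            totals[cluster] += clicks
    return dict(totals)
```

Note that a page tied to more than one cluster contributes its clicks to each of them, so the cluster totals can add up to more than the site total.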

If you haven’t done it initially, don’t worry, you can use LLMs.

Scrape the pages on your website, along with the keywords they rank for (top 5 by clicks).

Then, you can simply classify them into groups you define.

You should get a decent content database now!

Site Level

Analysis on a site level is the most misleading and misunderstood of them all.

Many bad audits focus on sitewide metrics that add no value and don’t tell much. Analysis on this level is abstract and can be dangerous without the right actions.

As an example, the average Bounce Rate of a website is pointless as it doesn’t elicit any action and it’s a misleading measure.

As a rule of thumb, avoid using average metrics such as position or bounce rate to describe a website. They don’t say anything about the situation but provide noise.

Instead, provide interesting and quick insights, such as:

- X% of pages get 0 clicks

- X% of pages don’t get internal links

- X queries can be targeted as opportunities
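The first two insights are simple shares that can be computed with one helper. This is a sketch that assumes one dictionary per page with `clicks` and `inlinks` keys (joined from Search Console and crawl data); the helper name is illustrative.

```python
def share(pages, condition) -> float:
    """Percentage of pages for which condition(page) is true."""
    if not pages:
        return 0.0
    return 100 * sum(1 for page in pages if condition(page)) / len(pages)


def zero_click_share(pages) -> float:
    return share(pages, lambda page: page["clicks"] == 0)


def orphan_share(pages) -> float:
    return share(pages, lambda page: page["inlinks"] == 0)
```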

You can also measure the % of anonymized queries (inside GSC BigQuery) purely to understand how much you are missing out.

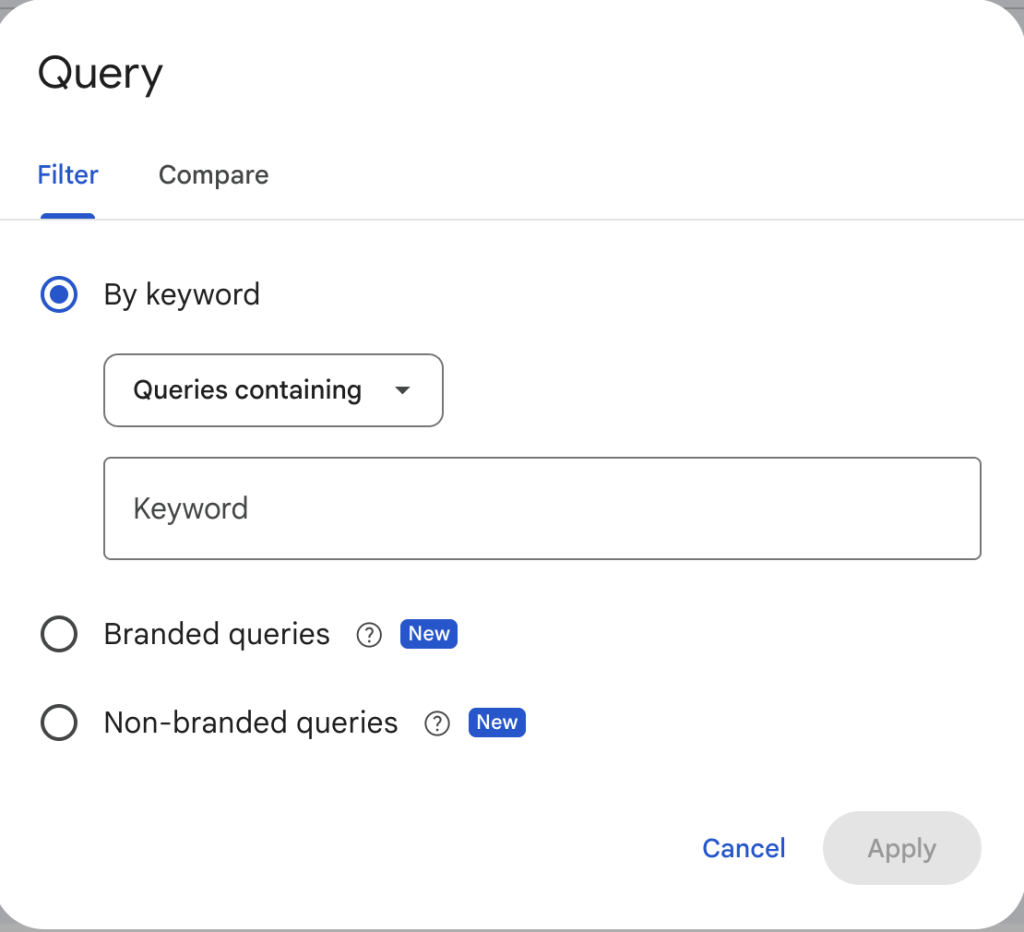

In the Google Search Console interface only, we can now also see the exact split of branded vs non-branded queries:

Plus, you could also report on the % of traffic brought by AI:

While this isn’t perfect because of consent, attribution issues and people not clicking, it’s better than nothing.

Insights

This is where you also make a difference because it’s your goal after analyzing data. What actionable insights can we use?

This is the pivotal point of an audit because, without insights, you are simply writing a checklist.

Sometimes you may not find any interesting insights; it happens. If you gather all the data I listed, though, this is very unlikely and you are almost guaranteed to spot interesting patterns.

Not every insight is necessarily relevant to the business, so it may not bring the desired results.

Insights are useful if and only if:

- They are actionable and lead to something practical

- They are relevant to the business/website and make sense for your context

If it’s not actionable, then you are wasting everyone’s time.

Your insights must be tied to some action, as illustrated above. The best way to make an audit more digestible is by drawing a connection between insights and actions.

Communication

This is the part where you communicate to the stakeholders and present your insights (hopefully).

Presenting data can be considered a different discipline altogether but here is some advice:

- Use visual content

- Dumb it down

- Less is better

- Add an Executive Summary

- Add a Recap at the end

What I listed above applies to both text and presentations or even Loom videos. The important thing is to clearly communicate what was found, why it matters and what to do next.

The picture above is an invitation to read one of the best books about DataViz out there that can help you in presentations.

The Big Idea

One or two sentences where you explain the main insights. You have to craft a short but explanatory sentence to inform the stakeholders.

For example:

“My analysis found that 50% of the pages get 0 clicks, negatively affecting the quality of the website. We may consider several actions based on the checklist I prepared.”

3-Minute Story

If you had only 3 minutes for a story, what would you say? Probably not much and that’s the goal!

3 minutes isn’t much time and a short story containing all the salient events can boost your reporting superpowers.

In SEO, this translates to connecting the dots of rankings and business impact, as well as feasibility.

Data is your glue to make these connections, as long as it makes sense.

Bullet Lists

Executive summaries work because they are simple lists. Prepare a bullet list of the main insights and limit yourself to 1 sentence each.

Use numbers if possible and try to make it as skimmable as possible.

While many fantasize about long reports, entrepreneurs/CEOs don’t have the time to read jargon.

Execution

Actually doing the job is the last step of the framework. You may say “but Marco this is not part of the audit!” and you’d be 100% right.

However, applying what you recommended is also part of the audit and you should think about execution during Step 1.

There are many ways to execute an audit and I haven’t even explored them all. Based on my past experience, I can detail some of them.

Common Actions

You may wonder what some of the most common recommendations to improve pages are.

I stated before that you don’t actually need to map every single page to a specific action, you can split them into groups and then decide.

There are cases where you can afford to give specific advice, though.

Some actions I’ve tested and I recommend are:

| Action | Reason |
|---|---|
| Add more content | Be sure that your content is the actual best out there. |
| Remove outdated information | Google (often) knows if something is outdated for some queries. |
| Push Internal Links | Relevant internal links do affect crawling and ranking. |
| Adapt content to new search intent(s) | Users’ preferences change over time and if you notice intent shifts, it’s time to change your article. |
| Prune | Some pages will be pruned due to their lack of relevance and thin content. |
| Merge | Pages targeting the same queries with the same intent can be merged. |
| Multimedia enrichment | Add videos, infographics and original pictures. Yes, that’s also part of the Search Experience. |

You can also add a column for priority when giving site-wide advice.

More generally, what you do should always fit into the Content Distribution & Repurposing framework:

Instead of just improving pages on a website, you have to consider the whole content strategy and processes.

Prevention

Executing the plan isn’t enough. A website needs to understand its most common problems and learn from them.

This is where Prescriptive Analytics comes into play, as I outlined in my article about the Analytics Framework.

If you want to differentiate yourself and be someone who thinks long-term, understanding recurrent issues is your #1 priority.

What the output looks like

The output can change a lot depending on the budget and complexity of the website.

In general, I like to think of:

- Google Docs/Notion – Written Report

- Google Sheets – Spreadsheet with numbers, pivots and small charts

- Loom – Explanatory videos

- Airtable – Content Plans/Automation

Actual audits should be different and customized. Never use stuff from the Internet without editing!

Difference with others

The average content audit is usually SEO-oriented and there is nothing wrong with that.

In practice, you may want to consider more data and get a general overview that considers other channels.

If you try to google content audits, you will get more of the same stuff, based on tools and with little customization.

The core idea behind Seotistics is to provide a data-informed approach that can help you understand the present and (possibly) the future of your website.

Important Warnings

Content Auditing can lead to many disasters and that’s one of the main reasons why you pay for quality.

Content Pruning is the practice of removing content to improve the performance of your website and also one of the most common causes of problems.

The reason why I included Crawl and Backlinks data is to avoid deleting pages that have key connections within your website.

Your pages form a graph, a connected structure where everything plays a vital role. Not really everything but a big part of it does.

Approach content auditing with a critical eye and be careful when deleting articles just because they get no traffic.

This also explains why I recommend using data from other channels to avoid eliminating highly profitable articles.
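These warnings can be turned into a simple pre-pruning check. The sketch below assumes one dictionary per page with `referring_domains`, `inlinks` and `other_channel_sessions` keys; the field names and default thresholds are hypothetical and should be tuned per website.

```python
def reasons_to_keep(page, min_referring_domains=1, min_inlinks=5,
                    min_other_sessions=1):
    """List the signals that argue against pruning a zero-traffic page."""
    reasons = []
    if page["referring_domains"] >= min_referring_domains:
        reasons.append("has backlinks")
    if page["inlinks"] >= min_inlinks:
        reasons.append("well linked internally")
    if page["other_channel_sessions"] >= min_other_sessions:
        reasons.append("gets traffic from other channels")
    return reasons
```

A page is a pruning candidate only when the returned list is empty; anything else deserves a manual look first.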

Tips For Huge Websites

The goal of an audit is to take action afterwards. By recalling this key point, we can state that you don’t need to analyze the entire website.

You can take a sample of pages (like a representative 40%) to give advice on the entire website.

By representative I mean pages that can work as a reference/template for other pages on the website.
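One way to approximate such a sample, as a sketch only, is to draw the same fraction of pages from every top-level URL section. This assumes the first folder in the URL is a reasonable proxy for the page template, which won't hold for every website; the function name and the fixed seed are illustrative.

```python
import math
import random
from collections import defaultdict
from urllib.parse import urlparse


def sample_by_section(urls, fraction=0.4, seed=42):
    """Draw the same fraction of pages from every top-level URL section."""
    sections = defaultdict(list)
    for url in urls:
        first_segment = urlparse(url).path.strip("/").split("/")[0]
        sections[first_segment].append(url)
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    sample = []
    for section_urls in sections.values():
        size = max(1, math.ceil(len(section_urls) * fraction))
        sample.extend(rng.sample(section_urls, size))
    return sample
```

Random selection within a section is a simplification; you can still hand-pick the pages that work best as templates within each group.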

Whatever can add revenue, improve SEO and provide unexplored opportunities justifies this process.

If you don’t know what’s going on, any change in behavior can lead to action.

Recall the data-informed approach when dealing with audits and data analyses!

How Much Does A Content Audit Cost?

According to Expert Market, the minimum cost sits around $2,000 with a range that spans up to $30,000.

In my experience, this range is accurate for US websites that can afford to pay such amounts.

Don’t expect the same figures in non-English markets, where you will hardly see that much money.

My pricing lies in the range of $2,000-$10,000 since I mostly work with US websites.